Public Member Functions | |

| def | __init__ (self, *ConvertRepoTask task, str root, Instrument instrument, Optional[str] run, Optional[ConversionSubset] subset=None) |

| bool | isDatasetTypeSpecial (self, str datasetTypeName) |

| Iterator[Tuple[str, CameraMapperMapping]] | iterMappings (self) |

| RepoWalker.Target | makeRepoWalkerTarget (self, str datasetTypeName, str template, Dict[str, type] keys, StorageClass storageClass, FormatterParameter formatter=None, Optional[PathElementHandler] targetHandler=None) |

| List[str] | getSpecialDirectories (self) |

| def | prep (self) |

| Iterator[FileDataset] | iterDatasets (self) |

| def | findDatasets (self) |

| def | expandDataIds (self) |

| def | ingest (self) |

| None | finish (self) |

| str | getRun (self, str datasetTypeName, Optional[str] calibDate=None) |

Public Attributes | |

| task | |

| root | |

| instrument | |

| subset | |

Detailed Description

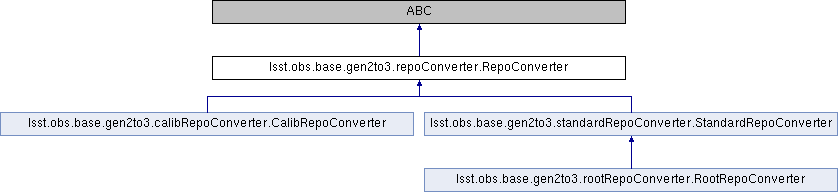

An abstract base class for objects that help `ConvertRepoTask` convert

datasets from a single Gen2 repository.

Parameters

----------

task : `ConvertRepoTask`

Task instance that is using this helper object.

root : `str`

Root of the Gen2 repo being converted. Will be converted to an

absolute path, resolving symbolic links and ``~``, if necessary.

instrument : `Instrument`

Gen3 instrument class to use for this conversion.

collections : `list` of `str`

Gen3 collections with which all converted datasets should be

associated.

subset : `ConversionSubset`, optional

Helper object that implements a filter that restricts the data IDs that

are converted.

Notes

-----

`RepoConverter` defines the only public API users of its subclasses should

use (`prep`, `ingest`, and `finish`). These delegate to several abstract

methods that subclasses must implement. In some cases, subclasses may

reimplement the public methods as well, but are expected to delegate to

``super()`` either at the beginning or end of their own implementation.
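
The delegation rule in these notes can be sketched with stand-in classes. `SketchConverter` and `LoggingConverter` are hypothetical names that do not import or reproduce the real `RepoConverter`; they only illustrate a subclass reimplementing public methods while delegating to ``super()`` at the beginning or end.

```python
class SketchConverter:
    """Hypothetical stand-in for a converter base class (not the real API)."""

    def __init__(self):
        self.calls = []

    def prep(self):
        self.calls.append("base.prep")

    def ingest(self):
        self.calls.append("base.ingest")

    def finish(self):
        self.calls.append("base.finish")


class LoggingConverter(SketchConverter):
    """Subclass that reimplements public methods but still delegates."""

    def prep(self):
        # Delegate at the beginning, then do subclass-specific work.
        super().prep()
        self.calls.append("sub.prep")

    def ingest(self):
        # Do subclass-specific work first, then delegate at the end.
        self.calls.append("sub.ingest")
        super().ingest()


converter = LoggingConverter()
converter.prep()
converter.ingest()
converter.finish()
```

Because `finish` is not overridden in the sketch, the call falls through to the base class unchanged.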

Definition at line 180 of file repoConverter.py.

Constructor & Destructor Documentation

◆ __init__()

| def lsst.obs.base.gen2to3.repoConverter.RepoConverter.__init__ | ( | self, | |

| *ConvertRepoTask | task, | ||

| str | root, | ||

| Instrument | instrument, | ||

| Optional[str] | run, | ||

| Optional[ConversionSubset] | subset = None |

||

| ) |

Definition at line 209 of file repoConverter.py.

Member Function Documentation

◆ expandDataIds()

| def lsst.obs.base.gen2to3.repoConverter.RepoConverter.expandDataIds | ( | self | ) |

Expand the data IDs for all datasets to be inserted. Subclasses may override this method, but must delegate to the base class implementation if they do. This involves queries to the registry, but not writes. It is guaranteed to be called between `findDatasets` and `ingest`.

Definition at line 441 of file repoConverter.py.

◆ findDatasets()

| def lsst.obs.base.gen2to3.repoConverter.RepoConverter.findDatasets | ( | self | ) |

Definition at line 424 of file repoConverter.py.

◆ finish()

| None lsst.obs.base.gen2to3.repoConverter.RepoConverter.finish | ( | self | ) |

Finish conversion of a repository. This is run after ``ingest``, and delegates to `_finish`, which should be overridden by derived classes instead of this method.
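
The split between `finish` and `_finish` can be sketched as below. `SketchBase` and `SketchCalibConverter` are hypothetical stand-ins, and the "common bookkeeping" step and its position before the hook are assumptions made for illustration; only the delegation from `finish` to `_finish` comes from the description above.

```python
class SketchBase:
    """Hypothetical stand-in; only the finish/_finish split is modelled."""

    def __init__(self):
        self.log = []

    def finish(self) -> None:
        # Public entry point: assumed shared work, then the subclass hook.
        self.log.append("finish: common bookkeeping")
        self._finish()

    def _finish(self) -> None:
        # Default hook does nothing; derived classes override this.
        pass


class SketchCalibConverter(SketchBase):
    def _finish(self) -> None:
        # Subclass-specific work goes in the hook, not in finish().
        self.log.append("_finish: subclass work")


helper = SketchCalibConverter()
helper.finish()
```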

Definition at line 511 of file repoConverter.py.

◆ getRun()

| str lsst.obs.base.gen2to3.repoConverter.RepoConverter.getRun | ( | self, | |

| str | datasetTypeName, | ||

| Optional[str] | calibDate = None |

||

| ) |

Return the name of the run into which instances of the given dataset

type are inserted in this collection.

Parameters

----------

datasetTypeName : `str`

Name of the dataset type.

calibDate : `str`, optional

If not `None`, the "CALIBDATE" associated with this (calibration)

dataset in the Gen2 data repository.

Returns

-------

run : `str`

Name of the `~lsst.daf.butler.CollectionType.RUN` collection.
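
A minimal sketch of how an implementation with this signature might behave. `SketchRunChooser` is hypothetical, and the naming scheme (appending the calibration date to a configured run name) is invented for illustration; it is not the scheme used by any of the real converters.

```python
from typing import Optional


class SketchRunChooser:
    """Hypothetical helper; the run naming scheme below is invented."""

    def __init__(self, run: str):
        self._run = run

    def getRun(self, datasetTypeName: str, calibDate: Optional[str] = None) -> str:
        if calibDate is None:
            # Non-calibration datasets all share the configured run.
            return self._run
        # Calibration datasets get a run name that encodes their CALIBDATE.
        return f"{self._run}/{calibDate}"


chooser = SketchRunChooser("sketch/run")
plain_run = chooser.getRun("raw")
calib_run = chooser.getRun("bias", calibDate="2020-01-01")
```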

Reimplemented in lsst.obs.base.gen2to3.standardRepoConverter.StandardRepoConverter, lsst.obs.base.gen2to3.rootRepoConverter.RootRepoConverter, and lsst.obs.base.gen2to3.calibRepoConverter.CalibRepoConverter.

Definition at line 535 of file repoConverter.py.

◆ getSpecialDirectories()

| List[str] lsst.obs.base.gen2to3.repoConverter.RepoConverter.getSpecialDirectories | ( | self | ) |

Return a list of directory paths that should not be searched for

files.

These may be directories that simply do not contain datasets (or

contain datasets in another repository), or directories whose datasets

are handled specially by a subclass.

Returns

-------

directories : `list` [`str`]

The full paths of directories to skip, relative to the repository

root.
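
One way a directory search could honour such a list is sketched below using only the standard library. The function `walk_skipping` and the directory names `CALIB` and `rerun` are assumptions for illustration; this is not the search used by the real converter.

```python
import os
import tempfile


def walk_skipping(root, special_directories):
    """Yield file paths under ``root`` (relative to it), pruning special directories.

    ``special_directories`` holds paths relative to ``root``.
    """
    skip = {os.path.normpath(d) for d in special_directories}
    for dirpath, dirnames, filenames in os.walk(root):
        rel_dir = os.path.relpath(dirpath, root)
        # Prune in place so os.walk never descends into skipped directories.
        dirnames[:] = [
            d for d in dirnames
            if os.path.normpath(os.path.join(rel_dir, d)) not in skip
        ]
        for name in filenames:
            yield os.path.normpath(os.path.join(rel_dir, name))


with tempfile.TemporaryDirectory() as root:
    # Build a tiny fake repository with one file in each directory.
    for sub in ("raw", "CALIB", os.path.join("rerun", "a")):
        os.makedirs(os.path.join(root, sub))
        with open(os.path.join(root, sub, "data.fits"), "w") as f:
            f.write("")
    found = sorted(walk_skipping(root, ["CALIB", "rerun"]))
```

Pruning `dirnames` in place relies on the default top-down traversal of `os.walk`, so nothing beneath a skipped directory is ever visited.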

Reimplemented in lsst.obs.base.gen2to3.rootRepoConverter.RootRepoConverter.

Definition at line 292 of file repoConverter.py.

◆ ingest()

| def lsst.obs.base.gen2to3.repoConverter.RepoConverter.ingest | ( | self | ) |

Insert converted datasets into the Gen3 repository. Subclasses may override this method, but must delegate to the base class implementation at some point in their own logic. This method is guaranteed to be called after `expandDataIds`.

Definition at line 483 of file repoConverter.py.

◆ isDatasetTypeSpecial()

| bool lsst.obs.base.gen2to3.repoConverter.RepoConverter.isDatasetTypeSpecial | ( | self, | |

| str | datasetTypeName | ||

| ) |

Test whether the given dataset type is handled specially by this

converter and hence should be ignored by generic base-class logic that

searches for dataset types to convert.

Parameters

----------

datasetTypeName : `str`

Name of the dataset type to test.

Returns

-------

special : `bool`

`True` if the dataset type is special.

Reimplemented in lsst.obs.base.gen2to3.standardRepoConverter.StandardRepoConverter, lsst.obs.base.gen2to3.rootRepoConverter.RootRepoConverter, and lsst.obs.base.gen2to3.calibRepoConverter.CalibRepoConverter.

Definition at line 221 of file repoConverter.py.

◆ iterDatasets()

| Iterator[FileDataset] lsst.obs.base.gen2to3.repoConverter.RepoConverter.iterDatasets | ( | self | ) |

Iterate over datasets in the repository that should be ingested into

the Gen3 repository.

The base class implementation yields nothing; the datasets handled by

the `RepoConverter` base class itself are read directly in

`findDatasets`.

Subclasses should override this method if they support additional

datasets that are handled some other way.

Yields

------

dataset : `FileDataset`

Structures representing datasets to be ingested. Paths should be

absolute.

Reimplemented in lsst.obs.base.gen2to3.standardRepoConverter.StandardRepoConverter, and lsst.obs.base.gen2to3.rootRepoConverter.RootRepoConverter.

Definition at line 405 of file repoConverter.py.

◆ iterMappings()

| Iterator[Tuple[str, CameraMapperMapping]] lsst.obs.base.gen2to3.repoConverter.RepoConverter.iterMappings | ( | self | ) |

Iterate over all `CameraMapper` `Mapping` objects that should be

considered for conversion by this repository.

This should include any datasets that may appear in the

repository, including those that are special (see

`isDatasetTypeSpecial`) and those that are being ignored (see

`ConvertRepoTask.isDatasetTypeIncluded`); this allows the converter

to identify and hence skip these datasets quietly instead of warning

about them as unrecognized.

Yields

------

datasetTypeName : `str`

Name of the dataset type.

mapping : `lsst.obs.base.mapping.Mapping`

Mapping object used by the Gen2 `CameraMapper` to describe the

dataset type.
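
The reason for yielding special and ignored dataset types as well can be sketched as follows. `classify_mappings` is a hypothetical helper, the two predicates stand in for `isDatasetTypeSpecial` and `ConvertRepoTask.isDatasetTypeIncluded`, and the mapping objects are replaced by plain strings.

```python
def classify_mappings(mappings, is_special, is_included):
    """Split dataset type names into those to convert and those to skip quietly."""
    to_convert, skipped = [], []
    for name, mapping in mappings:
        if is_special(name) or not is_included(name):
            # Known but deliberately not converted here: no warning needed.
            skipped.append(name)
        else:
            to_convert.append(name)
    return to_convert, skipped


# Stand-in data: real Mapping objects are replaced by template strings.
mappings = [
    ("raw", "raw/%(visit)d.fits"),
    ("camera", "camera.fits"),
    ("calexp", "calexp/%(visit)d.fits"),
]
to_convert, skipped = classify_mappings(
    mappings,
    is_special=lambda name: name == "camera",
    is_included=lambda name: name != "calexp",
)
```

Because every name passes through the loop, anything encountered later that appears in neither list can be reported as unrecognized.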

Reimplemented in lsst.obs.base.gen2to3.standardRepoConverter.StandardRepoConverter, and lsst.obs.base.gen2to3.calibRepoConverter.CalibRepoConverter.

Definition at line 239 of file repoConverter.py.

◆ makeRepoWalkerTarget()

| RepoWalker.Target lsst.obs.base.gen2to3.repoConverter.RepoConverter.makeRepoWalkerTarget | ( | self, | |

| str | datasetTypeName, | ||

| str | template, | ||

| Dict[str, type] | keys, | ||

| StorageClass | storageClass, | ||

| FormatterParameter | formatter = None, |

||

| Optional[PathElementHandler] | targetHandler = None |

||

| ) |

Make a struct that identifies a dataset type to be extracted by

walking the repo directory structure.

Parameters

----------

datasetTypeName : `str`

Name of the dataset type (the same in both Gen2 and Gen3).

template : `str`

The full Gen2 filename template.

keys : `dict` [`str`, `type`]

A dictionary mapping Gen2 data ID key to the type of its value.

storageClass : `lsst.daf.butler.StorageClass`

Gen3 storage class for this dataset type.

formatter : `lsst.daf.butler.Formatter` or `str`, optional

A Gen3 formatter class or fully-qualified name.

targetHandler : `PathElementHandler`, optional

Specialist target handler to use for this dataset type.

Returns

-------

target : `RepoWalker.Target`

A struct containing information about the target dataset (much of

it simply forwarded from the arguments).
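
How `template` and `keys` could work together when matching files is sketched below. It assumes the template uses printf-style ``%(key)d`` and ``%(key)s`` placeholders; `template_to_regex` and `extract_data_id` are hypothetical helpers and are not part of `RepoWalker`.

```python
import re
from typing import Dict, Optional


def template_to_regex(template: str, keys: Dict[str, type]) -> re.Pattern:
    """Build a regex with one named group per ``%(key)...`` placeholder."""
    pattern = ""
    pos = 0
    # Match placeholders such as %(visit)07d or %(filter)s.
    for match in re.finditer(r"%\((\w+)\)[0-9.]*([ds])", template):
        pattern += re.escape(template[pos:match.start()])
        key = match.group(1)
        # Integer keys match digits; other keys match up to the next "/".
        body = r"\d+" if keys[key] is int else r"[^/]+"
        pattern += f"(?P<{key}>{body})"
        pos = match.end()
    pattern += re.escape(template[pos:])
    return re.compile(pattern)


def extract_data_id(path: str, template: str, keys: Dict[str, type]) -> Optional[dict]:
    """Return the data ID encoded in ``path``, or `None` if it does not match."""
    match = template_to_regex(template, keys).fullmatch(path)
    if match is None:
        return None
    # Convert each captured string to the type declared for its key.
    return {key: keys[key](value) for key, value in match.groupdict().items()}


keys = {"visit": int, "filter": str}
template = "raw/%(filter)s/v%(visit)07d.fits"
data_id = extract_data_id("raw/r/v0001234.fits", template, keys)
no_match = extract_data_id("calexp/r/v0001234.fits", template, keys)
```

The sketch does not handle a key that appears more than once in a template, since a regex cannot define the same named group twice.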

Reimplemented in lsst.obs.base.gen2to3.standardRepoConverter.StandardRepoConverter, and lsst.obs.base.gen2to3.calibRepoConverter.CalibRepoConverter.

Definition at line 261 of file repoConverter.py.

◆ prep()

| def lsst.obs.base.gen2to3.repoConverter.RepoConverter.prep | ( | self | ) |

Perform preparatory work associated with the dataset types to be

converted from this repository (but not the datasets themselves).

Notes

-----

This should be a relatively fast operation that should not depend on

the size of the repository.

Subclasses may override this method, but must delegate to the base class

implementation at some point in their own logic. More often, subclasses

will specialize the behavior of `prep` by overriding other methods to

which the base class implementation delegates. These include:

- `iterMappings`

- `isDatasetTypeSpecial`

- `getSpecialDirectories`

- `makeRepoWalkerTarget`

This should not perform any write operations to the Gen3 repository.

It is guaranteed to be called before `ingest`.

Reimplemented in lsst.obs.base.gen2to3.standardRepoConverter.StandardRepoConverter, and lsst.obs.base.gen2to3.rootRepoConverter.RootRepoConverter.

Definition at line 308 of file repoConverter.py.

Member Data Documentation

◆ instrument

| lsst.obs.base.gen2to3.repoConverter.RepoConverter.instrument |

Definition at line 213 of file repoConverter.py.

◆ root

| lsst.obs.base.gen2to3.repoConverter.RepoConverter.root |

Definition at line 212 of file repoConverter.py.

◆ subset

| lsst.obs.base.gen2to3.repoConverter.RepoConverter.subset |

Definition at line 214 of file repoConverter.py.

◆ task

| lsst.obs.base.gen2to3.repoConverter.RepoConverter.task |

Definition at line 211 of file repoConverter.py.

The documentation for this class was generated from the following file:

- /j/snowflake/release/lsstsw/stack/0.2.1/Linux64/obs_base/21.0.0-27-gbbd0d29+ae871e0f33/python/lsst/obs/base/gen2to3/repoConverter.py