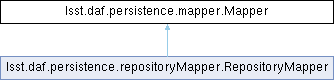

Inheritance diagram for lsst.daf.persistence.mapper.Mapper:

Public Member Functions

- def __new__(cls, *args, **kwargs)
- def __init__(self, **kwargs)
- def __getstate__(self)
- def __setstate__(self, state)
- def keys(self)
- def queryMetadata(self, datasetType, format, dataId)
- def getDatasetTypes(self)
- def map(self, datasetType, dataId, write=False)
- def canStandardize(self, datasetType)
- def standardize(self, datasetType, item, dataId)
- def validate(self, dataId)
- def backup(self, datasetType, dataId)
- def getRegistry(self)

Static Public Member Functions

- def Mapper(cfg)

Detailed Description

Mapper is a base class for all mappers.

Subclasses may define the following methods:

map_{datasetType}(self, dataId, write)

Map a dataset id for the given dataset type into a ButlerLocation. If write=True, this mapping is for an output dataset.

query_{datasetType}(self, format, dataId)

Return the possible values for the format fields that would produce datasets in combination with the provided partial dataId.

std_{datasetType}(self, item, dataId)

Standardize an object of the given dataset type.

Methods that must be overridden:

keys(self)

Return a list of the keys that can be used in data ids.

Other public methods:

__init__(self, **kwargs)

getDatasetTypes(self)

map(self, datasetType, dataId, write=False)

queryMetadata(self, datasetType, format, dataId)

canStandardize(self, datasetType)

standardize(self, datasetType, item, dataId)

validate(self, dataId)
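The naming convention above can be sketched without the LSST stack installed. In the block below, `MapperSketch` is a stand-in that copies only the `getattr`-based dispatch visible in the mapper.py listings on this page; `ToyMapper`, the `raw` dataset type, and the path template are hypothetical, and a plain string stands in for a ButlerLocation. The `query_{datasetType}` hook follows the same pattern.

```python
class MapperSketch:
    """Stand-in for lsst.daf.persistence.Mapper (dispatch logic only)."""

    def validate(self, dataId):
        return dataId

    def map(self, datasetType, dataId, write=False):
        # Look up map_<datasetType> on the subclass and call it.
        func = getattr(self, 'map_' + datasetType)
        return func(self.validate(dataId), write)

    def canStandardize(self, datasetType):
        return hasattr(self, 'std_' + datasetType)

    def standardize(self, datasetType, item, dataId):
        if hasattr(self, 'std_' + datasetType):
            func = getattr(self, 'std_' + datasetType)
            return func(item, self.validate(dataId))
        return item  # no std_ hook: the item passes through unchanged


class ToyMapper(MapperSketch):
    """Hypothetical subclass that handles one dataset type, 'raw'."""

    def keys(self):
        # The one method the class description says must be overridden.
        return ['visit', 'ccd']

    def map_raw(self, dataId, write):
        # A real mapper would build a ButlerLocation; a path string stands in.
        return 'raw/v{visit}/c{ccd}.fits'.format(**dataId)

    def std_raw(self, item, dataId):
        # Attach the visit to the item as a toy form of standardization.
        return {'data': item, 'visit': dataId['visit']}
```

With this sketch, `ToyMapper().map('raw', {'visit': 1, 'ccd': 0})` resolves to `map_raw`, while `standardize` for a dataset type without a `std_` hook returns the item as given.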

Constructor & Destructor Documentation

◆ Mapper()

def lsst.daf.persistence.mapper.Mapper.Mapper(cfg) [static]

Instantiate a Mapper from a configuration.

In some cases the cfg may already have been instantiated into a Mapper; this is allowed, and the input is simply returned.

:param cfg: the cfg for this mapper. It is recommended that this be created by calling Mapper.cfg()

:return: a Mapper instance

Definition at line 71 of file mapper.py.

    def Mapper(cfg):
        '''Instantiate a Mapper from a configuration.
        In some cases the cfg may have already been instantiated into a Mapper, this is allowed and
        the input var is simply returned.

        :param cfg: the cfg for this mapper. It is recommended this be created by calling
                    Mapper.cfg()
        :return: a Mapper instance
        '''
        # A Policy names the mapper class under 'cls'; instantiate it with the policy.
        if isinstance(cfg, Policy):
            return cfg['cls'](cfg)
        # Anything else is returned unchanged.
        return cfg
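A minimal sketch of the two branches in the listing above, runnable without the LSST stack. The `Policy` class here is a dict-based stand-in (an assumption, not the real Policy API), `make_mapper` is a hypothetical free-function copy of the factory logic, and `ToyMapper` with its `root` entry is invented for illustration.

```python
class Policy(dict):
    """Stand-in for the Policy type checked by the factory (assumed dict-like)."""


class ToyMapper:
    """Hypothetical mapper whose constructor accepts the policy itself."""

    def __init__(self, cfg):
        self.root = cfg['root']


def make_mapper(cfg):
    """Copy of the factory logic: build from a Policy, else pass through."""
    if isinstance(cfg, Policy):
        return cfg['cls'](cfg)
    return cfg


# A Policy is turned into an instance of the class stored under 'cls'.
mapper = make_mapper(Policy(cls=ToyMapper, root='/data'))

# An already-built mapper is handed back as the same object.
same = make_mapper(mapper)
```

The pass-through branch lets callers hand over either a configuration or a ready instance without checking which one they hold.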

◆ __init__()

def lsst.daf.persistence.mapper.Mapper.__init__(self, **kwargs)

Reimplemented in lsst.daf.persistence.repositoryMapper.RepositoryMapper.

Definition at line 100 of file mapper.py.

    def __init__(self, **kwargs):
        pass

Member Function Documentation

◆ __getstate__()

def lsst.daf.persistence.mapper.Mapper.__getstate__(self)

◆ __new__()

def lsst.daf.persistence.mapper.Mapper.__new__(cls, *args, **kwargs)

Create a new Mapper, saving arguments for pickling. This is in __new__ instead of __init__ to save the user from having to save the arguments themselves (either explicitly, or by calling the super's __init__ with all their *args, **kwargs). The resulting pickling system (of __new__, __getstate__ and __setstate__) is similar to how __reduce__ is usually used, except that we save the user from any responsibility (except when overriding __new__, but that is not common).

Definition at line 84 of file mapper.py.

    def __new__(cls, *args, **kwargs):
        """Create a new Mapper, saving arguments for pickling.

        This is in __new__ instead of __init__ to save the user
        from having to save the arguments themselves (either explicitly,
        or by calling the super's __init__ with all their
        *args, **kwargs). The resulting pickling system (of __new__,
        __getstate__ and __setstate__) is similar to how __reduce__
        is usually used, except that we save the user from any
        responsibility (except when overriding __new__, but that
        is not common).
        """
        self = super().__new__(cls)
        # Remember the constructor arguments so the instance can be rebuilt later.
        self._arguments = (args, kwargs)
        return self
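The pickling scheme described above can be sketched as follows. Only `__new__` is taken from the listing; the bodies of `__getstate__` and `__setstate__` are not shown on this page, so the versions below are assumptions about how such a scheme is typically completed. `ArgSavingBase`, `ToyMapper`, and `rebuild` are hypothetical names. `rebuild` replays by hand the steps that pickle (protocol 2 and later) performs for such a class: read the state, create a bare instance with `__new__`, then call `__setstate__`.

```python
class ArgSavingBase:
    """Stand-in base class that records constructor arguments in __new__."""

    def __new__(cls, *args, **kwargs):
        self = super().__new__(cls)
        self._arguments = (args, kwargs)
        return self

    def __getstate__(self):
        # Assumption: the pickled state is just the saved arguments.
        return self._arguments

    def __setstate__(self, state):
        # Assumption: restore by re-running __init__ with the saved arguments.
        self._arguments = state
        args, kwargs = state
        self.__init__(*args, **kwargs)


class ToyMapper(ArgSavingBase):
    """Hypothetical subclass; note that it never stores its arguments itself."""

    def __init__(self, root, calibRoot=None):
        self.root = root
        self.calibRoot = calibRoot
        self.cache = {}  # rebuilt by __init__, never carried in the state


def rebuild(obj):
    """Replay the create-then-restore steps used when unpickling."""
    state = obj.__getstate__()
    clone = type(obj).__new__(type(obj))
    clone.__setstate__(state)
    return clone
```

The design choice is that subclass authors write an ordinary `__init__`; anything derived inside it (like `cache` here) is recomputed on restore rather than serialized.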

◆ __setstate__()

def lsst.daf.persistence.mapper.Mapper.__setstate__(self, state)

◆ backup()

def lsst.daf.persistence.mapper.Mapper.backup(self, datasetType, dataId)

Rename any existing object with the given type and dataId. Not implemented in the base mapper.

Definition at line 191 of file mapper.py.

    def backup(self, datasetType, dataId):
        """Rename any existing object with the given type and dataId.

        Not implemented in the base mapper.
        """
        raise NotImplementedError("Base-class Mapper does not implement backups")

◆ canStandardize()

def lsst.daf.persistence.mapper.Mapper.canStandardize(self, datasetType)

Return true if this mapper can standardize an object of the given dataset type.

Definition at line 167 of file mapper.py.

    def canStandardize(self, datasetType):
        """Return true if this mapper can standardize an object of the given
        dataset type."""

        # A std_<datasetType> method on the mapper signals support.
        return hasattr(self, 'std_' + datasetType)

◆ getDatasetTypes()

def lsst.daf.persistence.mapper.Mapper.getDatasetTypes(self)

◆ getRegistry()

def lsst.daf.persistence.mapper.Mapper.getRegistry(self)

◆ keys()

def lsst.daf.persistence.mapper.Mapper.keys(self)

◆ map()

def lsst.daf.persistence.mapper.Mapper.map(self, datasetType, dataId, write=False)

Map a data id using the mapping method for its dataset type.

    Parameters
    ----------
    datasetType : string
        The datasetType to map
    dataId : DataId instance
        The dataId to use when mapping
    write : bool, optional
        Indicates if the map is being performed for a read operation
        (False) or a write operation (True)

    Returns
    -------
    ButlerLocation or a list of ButlerLocation
        The location(s) found for the map operation. If write is True, a
        list is returned. If write is False a single ButlerLocation is
        returned.

    Raises
    ------
    NoResults
        If no location was found for this map operation, the derived mapper
        class may raise a lsst.daf.persistence.NoResults exception. Butler
        catches this and will look in the next Repository if there is one.

Definition at line 137 of file mapper.py.

    def map(self, datasetType, dataId, write=False):
        """Map a data id using the mapping method for its dataset type.

        Parameters
        ----------
        datasetType : string
            The datasetType to map
        dataId : DataId instance
            The dataId to use when mapping
        write : bool, optional
            Indicates if the map is being performed for a read operation
            (False) or a write operation (True)

        Returns
        -------
        ButlerLocation or a list of ButlerLocation
            The location(s) found for the map operation. If write is True, a
            list is returned. If write is False a single ButlerLocation is
            returned.

        Raises
        ------
        NoResults
            If no location was found for this map operation, the derived mapper
            class may raise a lsst.daf.persistence.NoResults exception. Butler
            catches this and will look in the next Repository if there is one.
        """
        # Dispatch to the subclass-provided map_<datasetType> method.
        func = getattr(self, 'map_' + datasetType)
        return func(self.validate(dataId), write)
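The NoResults behaviour described in the docstring can be illustrated with a small sketch. Everything here is a stand-in: `NoResults` mimics lsst.daf.persistence.NoResults, `RepoMapper` is a hypothetical mapper backed by a set of known visits, path strings replace ButlerLocation objects, and `first_location` is an invented helper that imitates the repository fallback the docstring attributes to Butler. It is not Butler's actual implementation.

```python
class NoResults(RuntimeError):
    """Stand-in for lsst.daf.persistence.NoResults."""


class RepoMapper:
    """Hypothetical mapper for one repository, backed by a set of visits."""

    def __init__(self, name, visits):
        self.name = name
        self.visits = visits

    def validate(self, dataId):
        return dataId

    def map(self, datasetType, dataId, write=False):
        # Same dispatch as the base-class listing above.
        func = getattr(self, 'map_' + datasetType)
        return func(self.validate(dataId), write)

    def map_raw(self, dataId, write):
        visit = dataId['visit']
        if not write and visit not in self.visits:
            raise NoResults('no raw for visit %r in %s' % (visit, self.name))
        return '%s/raw-%d.fits' % (self.name, visit)


def first_location(mappers, datasetType, dataId):
    """Try each repository's mapper in order until one finds the dataset."""
    for mapper in mappers:
        try:
            return mapper.map(datasetType, dataId)
        except NoResults:
            continue  # fall through to the next repository
    raise NoResults('%s %r not found in any repository' % (datasetType, dataId))
```

Raising a dedicated exception, rather than returning None, lets the caller distinguish "not in this repository" from other failures and keep searching.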

◆ queryMetadata()

def lsst.daf.persistence.mapper.Mapper.queryMetadata(self, datasetType, format, dataId)

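Get possible values for keys given a partial data id.

:param datasetType: see documentation about the use of datasetType

:param key: this is used as the 'level' parameter (listed in the docstring, but not present in the method signature)

:param format: passed through unchanged to the query_{datasetType} method

:param dataId: see documentation about the use of dataId

:return: the value returned by the query_{datasetType} method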

Definition at line 114 of file mapper.py.

    def queryMetadata(self, datasetType, format, dataId):
        """Get possible values for keys given a partial data id.

        :param datasetType: see documentation about the use of datasetType
        :param key: this is used as the 'level' parameter
        :param format:
        :param dataId: see documentation about the use of dataId
        :return:
        """
        # Dispatch to the subclass-provided query_<datasetType> method.
        func = getattr(self, 'query_' + datasetType)

        val = func(format, self.validate(dataId))
        return val
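A sketch of what a `query_{datasetType}` hook might look like, and of what happens when none exists. `ToyMapper`, the `raw` dataset type, and the in-memory `_rows` table are hypothetical; a real mapper would more likely consult a registry. The return format (a list of tuples, one element per requested format field) is an assumption made for this example. Because the listing above calls `getattr` without a default, an unknown dataset type surfaces as an `AttributeError`.

```python
class ToyMapper:
    """Hypothetical mapper with only the pieces needed for query dispatch."""

    # Assumed in-memory stand-in for a registry of known datasets.
    _rows = [
        {'visit': 1, 'ccd': 0, 'filter': 'r'},
        {'visit': 1, 'ccd': 1, 'filter': 'r'},
        {'visit': 2, 'ccd': 0, 'filter': 'g'},
    ]

    def validate(self, dataId):
        return dataId

    def queryMetadata(self, datasetType, format, dataId):
        # Same dispatch as the base-class listing above.
        func = getattr(self, 'query_' + datasetType)
        return func(format, self.validate(dataId))

    def query_raw(self, format, dataId):
        # Keep rows that agree with every key in the partial dataId.
        matches = [row for row in self._rows
                   if all(row.get(k) == v for k, v in dataId.items())]
        # Project each match onto the requested format fields.
        return [tuple(row[name] for name in format) for row in matches]
```

For instance, `ToyMapper().queryMetadata('raw', ('visit', 'ccd'), {'filter': 'r'})` selects the two rows taken in the `r` filter and reports their visit and ccd values.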

◆ standardize()

def lsst.daf.persistence.mapper.Mapper.standardize(self, datasetType, item, dataId)

Standardize an object using the standardization method for its data set type, if it exists.

Definition at line 173 of file mapper.py.

    def standardize(self, datasetType, item, dataId):
        """Standardize an object using the standardization method for its data
        set type, if it exists."""

        if hasattr(self, 'std_' + datasetType):
            func = getattr(self, 'std_' + datasetType)
            return func(item, self.validate(dataId))
        # No std_<datasetType> method: return the item unchanged.
        return item

◆ validate()

def lsst.daf.persistence.mapper.Mapper.validate(self, dataId)

Validate a dataId's contents. If the dataId is valid, return it. If an invalid component can be transformed into a valid one, copy the dataId, fix the component, and return the copy. Otherwise, raise an exception.

Definition at line 182 of file mapper.py.

    def validate(self, dataId):
        """Validate a dataId's contents.

        If the dataId is valid, return it. If an invalid component can be
        transformed into a valid one, copy the dataId, fix the component, and
        return the copy. Otherwise, raise an exception."""

        # The base class accepts every dataId as-is.
        return dataId
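The base implementation returns the dataId untouched, so the three outcomes named in the docstring only appear in overrides. A minimal sketch of such an override follows; `VisitMapper`, the rule that `visit` must be an integer, and the choice of `ValueError` are all assumptions made for illustration, and a plain dict stands in for a DataId.

```python
class VisitMapper:
    """Hypothetical mapper that requires dataId['visit'] to be an int."""

    def validate(self, dataId):
        visit = dataId.get('visit')
        if isinstance(visit, int):
            # Already valid: return the same object.
            return dataId
        if isinstance(visit, str) and visit.isdigit():
            # Fixable: copy first so the caller's dataId is left untouched.
            fixed = dict(dataId)
            fixed['visit'] = int(visit)
            return fixed
        # Neither valid nor fixable.
        raise ValueError('invalid visit in dataId: %r' % (visit,))
```

Copying before fixing matters because map, queryMetadata, and standardize all pass the caller's dataId through validate; mutating it in place would change the caller's object as a side effect.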

The documentation for this class was generated from the following file:

- /j/snowflake/release/lsstsw/stack/lsst-scipipe-5.0.1/Linux64/daf_persistence/g17e5ecfddb+50a5ac4092/python/lsst/daf/persistence/mapper.py