Public Member Functions

| def | __init__ (self, butler=None, schema=None, refObjLoader=None, reuse=tuple(), **kwargs) |

| def | __reduce__ (self) |

| def | batchWallTime (cls, time, parsedCmd, numCpus) |

| Return walltime request for batch job. | |

| def | runDataRef (self, patchRefList) |

| Run multiband processing on coadds. | |

| def | runDetection (self, cache, patchRef) |

| Run detection on a patch. | |

| def | runMergeDetections (self, cache, dataIdList) |

| Run detection merging on a patch. | |

| def | runDeblendMerged (self, cache, dataIdList) |

| def | runMeasurements (self, cache, dataId) |

| def | runMergeMeasurements (self, cache, dataIdList) |

| Run measurement merging on a patch. | |

| def | runForcedPhot (self, cache, dataId) |

| Run forced photometry on a patch for a single filter. | |

| def | writeMetadata (self, dataRef) |

| def | parseAndRun (cls, *args, **kwargs) |

| def | parseAndRun (cls, args=None, config=None, log=None, doReturnResults=False) |

| def | parseAndSubmit (cls, args=None, **kwargs) |

| def | batchCommand (cls, args) |

| Return command to run CmdLineTask. | |

| def | logOperation (self, operation, catch=False, trace=True) |

| Provide a context manager for logging an operation. | |

| def | applyOverrides (cls, config) |

| def | writeConfig (self, butler, clobber=False, doBackup=True) |

| def | writeSchemas (self, butler, clobber=False, doBackup=True) |

| def | writePackageVersions (self, butler, clobber=False, doBackup=True, dataset="packages") |

| def | emptyMetadata (self) |

| def | getSchemaCatalogs (self) |

| def | getAllSchemaCatalogs (self) |

| def | getFullMetadata (self) |

| def | getFullName (self) |

| def | getName (self) |

| def | getTaskDict (self) |

| def | makeSubtask (self, name, **keyArgs) |

| def | timer (self, name, logLevel=Log.DEBUG) |

| def | makeField (cls, doc) |

Public Attributes

| butler | |

| reuse | |

| measurementInput | |

| deblenderOutput | |

| coaddType | |

| metadata | |

| log | |

| config | |

Static Public Attributes

| ConfigClass = MultiBandDriverConfig | |

| RunnerClass = MultiBandDriverTaskRunner | |

| bool | canMultiprocess = True |

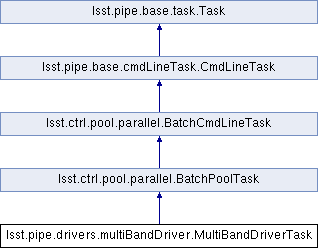

Detailed Description

Multi-node driver for multiband processing

Definition at line 95 of file multiBandDriver.py.

Constructor & Destructor Documentation

◆ __init__()

| def lsst.pipe.drivers.multiBandDriver.MultiBandDriverTask.__init__ | ( | self, | |

| butler = None, | |||

| schema = None, | |||

| refObjLoader = None, | |||

| reuse = tuple(), | |||

| **kwargs | |||

| ) |

Parameters

- [in] butler: the butler, which can be used to retrieve the schema or passed to the refObjLoader constructor if it is needed.

- [in] schema: the schema of the source detection catalog used as input.

- [in] refObjLoader: an instance of LoadReferenceObjectsTask that supplies an external reference catalog. May be None if the butler argument is provided or all steps requiring a reference catalog are disabled.

Definition at line 101 of file multiBandDriver.py.

Member Function Documentation

◆ __reduce__()

| def lsst.pipe.drivers.multiBandDriver.MultiBandDriverTask.__reduce__ | ( | self | ) |

Pickler

Reimplemented from lsst.pipe.base.task.Task.

Definition at line 148 of file multiBandDriver.py.

◆ applyOverrides()

|

inherited |

A hook to allow a task to change the values of its config *after*

the camera-specific overrides are loaded but before any command-line

overrides are applied.

Parameters

----------

config : instance of task's ``ConfigClass``

Task configuration.

Notes

-----

This is necessary in some cases because the camera-specific overrides

may retarget subtasks, wiping out changes made in

ConfigClass.setDefaults. See LSST Trac ticket #2282 for more

discussion.

.. warning::

This is called by CmdLineTask.parseAndRun; other ways of

constructing a config will not apply these overrides.

Reimplemented in lsst.pipe.drivers.constructCalibs.FringeTask, lsst.pipe.drivers.constructCalibs.FlatTask, lsst.pipe.drivers.constructCalibs.DarkTask, and lsst.pipe.drivers.constructCalibs.BiasTask.

Definition at line 587 of file cmdLineTask.py.

◆ batchCommand()

|

inherited |

Return command to run CmdLineTask.

@param cls: Class

@param args: Parsed batch job arguments (from BatchArgumentParser)

Definition at line 476 of file parallel.py.

◆ batchWallTime()

| def lsst.pipe.drivers.multiBandDriver.MultiBandDriverTask.batchWallTime | ( | cls, | |

| time, | |||

| parsedCmd, | |||

| numCpus | |||

| ) |

Return walltime request for batch job.

@param time: Requested time per iteration

@param parsedCmd: Results of argument parsing

@param numCpus: Number of CPU cores

Reimplemented from lsst.ctrl.pool.parallel.BatchCmdLineTask.

Definition at line 165 of file multiBandDriver.py.
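
This page does not state how the walltime is computed. As a rough illustration only, the sketch below assumes the request scales with the number of targets and is shared evenly across the cores. The function `estimate_wall_time` and the parameter `num_targets` are hypothetical names for this example, not part of the pipe_drivers API.

```python
def estimate_wall_time(time_per_iteration, num_targets, num_cpus):
    """Hypothetical estimate: total work divided evenly over the cores."""
    if num_cpus <= 0:
        raise ValueError("num_cpus must be positive")
    # Total work is (time per target) x (number of targets); assume it
    # parallelises perfectly over the available cores.
    return time_per_iteration * num_targets / float(num_cpus)


# Example: 600 s per target, 12 targets, 4 cores.
request = estimate_wall_time(600, 12, 4)
print(request)  # 1800.0
```

A real estimate would likely need headroom for serial stages and I/O, which this sketch ignores.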

◆ emptyMetadata()

|

inherited |

Empty (clear) the metadata for this Task and all sub-Tasks.

Definition at line 166 of file task.py.

◆ getAllSchemaCatalogs()

|

inherited |

Get schema catalogs for all tasks in the hierarchy, combining the

results into a single dict.

Returns

-------

schemacatalogs : `dict`

Keys are butler dataset type, values are an empty catalog (an

instance of the appropriate `lsst.afw.table` Catalog type) for all

tasks in the hierarchy, from the top-level task down

through all subtasks.

Notes

-----

This method may be called on any task in the hierarchy; it will return

the same answer, regardless.

The default implementation should always suffice. If your subtask uses

schemas, then override `Task.getSchemaCatalogs`, not this method.

Definition at line 204 of file task.py.

◆ getFullMetadata()

|

inherited |

Get metadata for all tasks.

Returns

-------

metadata : `lsst.daf.base.PropertySet`

The `~lsst.daf.base.PropertySet` keys are the full task name.

Values are metadata for the top-level task and all subtasks,

sub-subtasks, etc.

Notes

-----

The returned metadata includes timing information (if

``@timer.timeMethod`` is used) and any metadata set by the task. The

name of each item consists of the full task name with ``.`` replaced

by ``:``, followed by ``.`` and the name of the item, e.g.::

topLevelTaskName:subtaskName:subsubtaskName.itemName

Using ``:`` in the full task name disambiguates the rare situation

that a task has a subtask and a metadata item with the same name.

Definition at line 229 of file task.py.
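
The naming rule for metadata items can be sketched in plain Python. This is a minimal illustration of the rule as described above, not the library's implementation; the helper `metadata_item_name` is a hypothetical name introduced for this example.

```python
def metadata_item_name(full_task_name, item_name):
    """Build a metadata key from a hierarchical task name and an item name."""
    # Periods in the full task name become colons, then the item name is
    # appended after a single period.
    return full_task_name.replace(".", ":") + "." + item_name


key = metadata_item_name(
    "topLevelTaskName.subtaskName.subsubtaskName", "itemName"
)
print(key)  # topLevelTaskName:subtaskName:subsubtaskName.itemName
```

Because the only remaining period separates the task path from the item, a subtask and a metadata item that share a name produce different keys.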

◆ getFullName()

|

inherited |

Get the task name as a hierarchical name including parent task

names.

Returns

-------

fullName : `str`

The full name consists of the name of the parent task and each

subtask separated by periods. For example:

- The full name of top-level task "top" is simply "top".

- The full name of subtask "sub" of top-level task "top" is

"top.sub".

- The full name of subtask "sub2" of subtask "sub" of top-level

task "top" is "top.sub.sub2".

Definition at line 256 of file task.py.
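
The hierarchical naming described above can be reproduced with a small stand-in class. `NamedNode` is a hypothetical class for illustration only; it is not `lsst.pipe.base.Task` and makes no claim about how that class stores its parent.

```python
class NamedNode:
    """Minimal stand-in for a task that knows its name and its parent."""

    def __init__(self, name, parent=None):
        self.name = name
        self.parent = parent

    def full_name(self):
        # A top-level node is named by itself; otherwise prefix the
        # parent's full name, separated by a period.
        if self.parent is None:
            return self.name
        return self.parent.full_name() + "." + self.name


top = NamedNode("top")
sub = NamedNode("sub", parent=top)
sub2 = NamedNode("sub2", parent=sub)

print(top.full_name())   # top
print(sub.full_name())   # top.sub
print(sub2.full_name())  # top.sub.sub2
```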

◆ getName()

|

inherited |

Get the name of the task.

Returns

-------

taskName : `str`

Name of the task.

See also

--------

getFullName

Definition at line 274 of file task.py.

◆ getSchemaCatalogs()

|

inherited |

Get the schemas generated by this task.

Returns

-------

schemaCatalogs : `dict`

Keys are butler dataset type, values are an empty catalog (an

instance of the appropriate `lsst.afw.table` Catalog type) for

this task.

Notes

-----

.. warning::

Subclasses that use schemas must override this method. The default

implementation returns an empty dict.

This method may be called at any time after the Task is constructed,

which means that all task schemas should be computed at construction

time, *not* when data is actually processed. This reflects the

philosophy that the schema should not depend on the data.

Returning catalogs rather than just schemas allows us to save e.g.

slots for SourceCatalog as well.

See also

--------

Task.getAllSchemaCatalogs

Definition at line 172 of file task.py.

◆ getTaskDict()

|

inherited |

Get a dictionary of all tasks as a shallow copy.

Returns

-------

taskDict : `dict`

Dictionary containing full task name: task object for the top-level

task and all subtasks, sub-subtasks, etc.

Definition at line 288 of file task.py.

◆ logOperation()

|

inherited |

Provide a context manager for logging an operation.

@param operation: description of operation (string)

@param catch: Catch all exceptions?

@param trace: Log a traceback of caught exception?

Note that if 'catch' is True, all exceptions are swallowed, but there may

be other side-effects such as undefined variables.

Definition at line 502 of file parallel.py.
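
The caveat about undefined variables is easiest to see with a stand-alone sketch. The context manager below is an assumed approximation built on the standard library's `contextlib` and `logging`; `log_operation` is a hypothetical function, and the actual method in parallel.py may log different messages or behave differently in detail.

```python
import logging
import traceback
from contextlib import contextmanager


@contextmanager
def log_operation(log, operation, catch=False, trace=True):
    """Log the start and end of an operation, optionally swallowing errors."""
    log.info("%s: Start", operation)
    try:
        yield
    except Exception:
        if not catch:
            raise  # propagate to the caller unchanged
        log.warning("%s: Caught exception", operation)
        if trace:
            log.warning(traceback.format_exc())
    finally:
        log.info("%s: End", operation)


log = logging.getLogger("demo")

with log_operation(log, "divide", catch=True, trace=False):
    ratio = 1 / 0  # raises ZeroDivisionError, so the assignment never happens

# The exception was swallowed, but 'ratio' was never bound.
try:
    ratio
    ratio_defined = True
except NameError:
    ratio_defined = False
print(ratio_defined)  # False
```

Code that follows a block run with `catch=True` should therefore not assume that names assigned inside the block exist.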

◆ makeField()

|

inherited |

Make a `lsst.pex.config.ConfigurableField` for this task.

Parameters

----------

doc : `str`

Help text for the field.

Returns

-------

configurableField : `lsst.pex.config.ConfigurableField`

A `~ConfigurableField` for this task.

Examples

--------

Provides a convenient way to specify that this task is a subtask of

another task.

Here is an example of use:

.. code-block:: python

class OtherTaskConfig(lsst.pex.config.Config):

aSubtask = ATaskClass.makeField("brief description of task")

Definition at line 359 of file task.py.

◆ makeSubtask()

|

inherited |

Create a subtask as a new instance and store it as the ``name``

attribute of this task.

Parameters

----------

name : `str`

Brief name of the subtask.

keyArgs

Extra keyword arguments used to construct the task. The following

arguments are automatically provided and cannot be overridden:

- "config".

- "parentTask".

Notes

-----

The subtask must be defined by ``Task.config.name``, an instance of

`~lsst.pex.config.ConfigurableField` or

`~lsst.pex.config.RegistryField`.

Definition at line 299 of file task.py.

◆ parseAndRun() [1/2]

|

inherited |

Run with an MPI process pool.

Definition at line 534 of file parallel.py.

◆ parseAndRun() [2/2]

|

inherited |

Parse an argument list and run the command.

Parameters

----------

args : `list`, optional

List of command-line arguments; if `None` use `sys.argv`.

config : `lsst.pex.config.Config`-type, optional

Config for task. If `None` use `Task.ConfigClass`.

log : `lsst.log.Log`-type, optional

Log. If `None` use the default log.

doReturnResults : `bool`, optional

If `True`, return the results of this task. Default is `False`.

This is only intended for unit tests and similar use. It can

easily exhaust memory (if the task returns enough data and you

call it enough times) and it will fail when using multiprocessing

if the returned data cannot be pickled.

Returns

-------

struct : `lsst.pipe.base.Struct`

Fields are:

``argumentParser``

the argument parser (`lsst.pipe.base.ArgumentParser`).

``parsedCmd``

the parsed command returned by the argument parser's

`~lsst.pipe.base.ArgumentParser.parse_args` method

(`argparse.Namespace`).

``taskRunner``

the task runner used to run the task (an instance of

`Task.RunnerClass`).

``resultList``

results returned by the task runner's ``run`` method, one entry

per invocation (`list`). This will typically be a list of

`Struct`, each containing at least an ``exitStatus`` integer

(0 or 1); see `Task.RunnerClass` (`TaskRunner` by default) for

more details.

Notes

-----

Calling this method with no arguments specified is the standard way to

run a command-line task from the command-line. For an example see

``pipe_tasks`` ``bin/makeSkyMap.py`` or almost any other file in that

directory.

If one or more of the dataIds fails then this routine will exit (with

a status giving the number of failed dataIds) rather than returning

this struct; this behaviour can be overridden by specifying the

``--noExit`` command-line option.

Definition at line 612 of file cmdLineTask.py.

◆ parseAndSubmit()

|

inherited |

Definition at line 435 of file parallel.py.

◆ runDataRef()

| def lsst.pipe.drivers.multiBandDriver.MultiBandDriverTask.runDataRef | ( | self, | |

| patchRefList | |||

| ) |

Run multiband processing on coadds.

Only the master node runs this method.

No real MPI communication (scatter/gather) takes place: all I/O goes

through the disk. We want the intermediate stages on disk, and the

component Tasks are implemented around this, so we just follow suit.

@param patchRefList: Data references on which to run measurement

Definition at line 178 of file multiBandDriver.py.

◆ runDeblendMerged()

| def lsst.pipe.drivers.multiBandDriver.MultiBandDriverTask.runDeblendMerged | ( | self, | |

| cache, | |||

| dataIdList | |||

| ) |

Run the deblender on a list of dataIds.

Only slave nodes execute this method.

Parameters

----------

cache: Pool cache

Pool cache with butler.

dataIdList: list

Data identifier for patch in each band.

Returns

-------

result: bool

whether the patch requires reprocessing.

Definition at line 349 of file multiBandDriver.py.

◆ runDetection()

| def lsst.pipe.drivers.multiBandDriver.MultiBandDriverTask.runDetection | ( | self, | |

| cache, | |||

| patchRef | |||

| ) |

Run detection on a patch.

Only slave nodes execute this method.

@param cache: Pool cache, containing butler

@param patchRef: Patch on which to do detection

Definition at line 314 of file multiBandDriver.py.

◆ runForcedPhot()

| def lsst.pipe.drivers.multiBandDriver.MultiBandDriverTask.runForcedPhot | ( | self, | |

| cache, | |||

| dataId | |||

| ) |

Run forced photometry on a patch for a single filter.

Only slave nodes execute this method.

@param cache: Pool cache, with butler

@param dataId: Data identifier for patch

Definition at line 448 of file multiBandDriver.py.

◆ runMeasurements()

| def lsst.pipe.drivers.multiBandDriver.MultiBandDriverTask.runMeasurements | ( | self, | |

| cache, | |||

| dataId | |||

| ) |

Run measurement on a patch for a single filter

Only slave nodes execute this method.

Parameters

----------

cache: Pool cache

Pool cache, with butler

dataId: dataRef

Data identifier for patch

Definition at line 408 of file multiBandDriver.py.

◆ runMergeDetections()

| def lsst.pipe.drivers.multiBandDriver.MultiBandDriverTask.runMergeDetections | ( | self, | |

| cache, | |||

| dataIdList | |||

| ) |

Run detection merging on a patch.

Only slave nodes execute this method.

@param cache: Pool cache, containing butler

@param dataIdList: List of data identifiers for the patch in different filters

Definition at line 331 of file multiBandDriver.py.
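
A `dataIdList` of this kind holds one data identifier per filter for a single patch. The sketch below shows one way such lists could be assembled from a flat list of identifiers. It is an illustration under assumptions: the keys `tract`, `patch`, and `filter` and the helper `group_by_patch` are hypothetical and are not taken from this page.

```python
from collections import defaultdict


def group_by_patch(data_ids):
    """Group per-filter data identifiers by the patch they refer to."""
    groups = defaultdict(list)
    for data_id in data_ids:
        # Identifiers that share tract and patch differ only by filter.
        groups[(data_id["tract"], data_id["patch"])].append(data_id)
    return dict(groups)


data_ids = [
    {"tract": 0, "patch": "1,1", "filter": "g"},
    {"tract": 0, "patch": "1,1", "filter": "r"},
    {"tract": 0, "patch": "1,2", "filter": "g"},
]
by_patch = group_by_patch(data_ids)
print(len(by_patch[(0, "1,1")]))  # 2
```

Each value in `by_patch` then plays the role of one `dataIdList` argument.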

◆ runMergeMeasurements()

| def lsst.pipe.drivers.multiBandDriver.MultiBandDriverTask.runMergeMeasurements | ( | self, | |

| cache, | |||

| dataIdList | |||

| ) |

Run measurement merging on a patch.

Only slave nodes execute this method.

@param cache: Pool cache, containing butler

@param dataIdList: List of data identifiers for the patch in different filters

Definition at line 429 of file multiBandDriver.py.

◆ timer()

|

inherited |

Context manager to log performance data for an arbitrary block of

code.

Parameters

----------

name : `str`

Name of code being timed; data will be logged using item name:

``Start`` and ``End``.

logLevel

A `lsst.log` level constant.

Examples

--------

Creating a timer context:

.. code-block:: python

with self.timer("someCodeToTime"):

pass # code to time

See also

--------

timer.logInfo

Definition at line 327 of file task.py.

◆ writeConfig()

|

inherited |

Write the configuration used for processing the data, or check that

an existing one is equal to the new one if present.

Parameters

----------

butler : `lsst.daf.persistence.Butler`

Data butler used to write the config. The config is written to

dataset type `CmdLineTask._getConfigName`.

clobber : `bool`, optional

A boolean flag that controls what happens if a config already has

been saved:

- `True`: overwrite or rename the existing config, depending on

``doBackup``.

- `False`: raise `TaskError` if this config does not match the

existing config.

doBackup : `bool`, optional

Set to `True` to back up the config files if clobbering.

Definition at line 727 of file cmdLineTask.py.
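
The interaction of ``clobber`` and ``doBackup`` can be summarised as a small decision function. This is a sketch of the documented behaviour only: `resolve_existing` and `TaskErrorStandIn` are hypothetical names, the returned strings are labels for this example, and no butler I/O is performed.

```python
class TaskErrorStandIn(Exception):
    """Local stand-in for TaskError, used only in this sketch."""


def resolve_existing(existing, new, clobber=False, do_backup=True):
    """Return a label for the action taken when persisting ``new``."""
    if existing is None:
        return "write"  # nothing saved yet
    if clobber:
        # Either rename the old copy first or overwrite it directly.
        return "rename-then-write" if do_backup else "overwrite"
    if existing != new:
        raise TaskErrorStandIn("new value does not match the saved one")
    return "keep"  # saved copy already matches


print(resolve_existing(None, {"a": 1}))                    # write
print(resolve_existing({"a": 1}, {"a": 1}))                # keep
print(resolve_existing({"a": 1}, {"a": 2}, clobber=True))  # rename-then-write
```

With ``clobber=False`` and a mismatch, the function raises instead of returning, mirroring the `TaskError` described above.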

◆ writeMetadata()

| def lsst.pipe.drivers.multiBandDriver.MultiBandDriverTask.writeMetadata | ( | self, | |

| dataRef | |||

| ) |

We don't collect any metadata, so skip writing it.

Reimplemented from lsst.pipe.base.cmdLineTask.CmdLineTask.

Definition at line 466 of file multiBandDriver.py.

◆ writePackageVersions()

|

inherited |

Compare and write package versions.

Parameters

----------

butler : `lsst.daf.persistence.Butler`

Data butler used to read/write the package versions.

clobber : `bool`, optional

A boolean flag that controls what happens if versions already have

been saved:

- `True`: overwrite or rename the existing version info, depending

on ``doBackup``.

- `False`: raise `TaskError` if this version info does not match

the existing.

doBackup : `bool`, optional

If `True` and clobbering, old package version files are backed up.

dataset : `str`, optional

Name of dataset to read/write.

Raises

------

TaskError

Raised if there is a version mismatch with current and persisted

lists of package versions.

Notes

-----

Note that this operation is subject to a race condition.

Definition at line 829 of file cmdLineTask.py.

◆ writeSchemas()

|

inherited |

Write the schemas returned by

`lsst.pipe.base.Task.getAllSchemaCatalogs`.

Parameters

----------

butler : `lsst.daf.persistence.Butler`

Data butler used to write the schema. Each schema is written to the

dataset type specified as the key in the dict returned by

`~lsst.pipe.base.Task.getAllSchemaCatalogs`.

clobber : `bool`, optional

A boolean flag that controls what happens if a schema already has

been saved:

- `True`: overwrite or rename the existing schema, depending on

``doBackup``.

- `False`: raise `TaskError` if this schema does not match the

existing schema.

doBackup : `bool`, optional

Set to `True` to back up the schema files if clobbering.

Notes

-----

If ``clobber`` is `False` and an existing schema does not match a

current schema, then some schemas may have been saved successfully

and others may not, and there is no easy way to tell which is which.

Definition at line 771 of file cmdLineTask.py.

Member Data Documentation

◆ butler

| lsst.pipe.drivers.multiBandDriver.MultiBandDriverTask.butler |

Definition at line 115 of file multiBandDriver.py.

◆ canMultiprocess

|

staticinherited |

Definition at line 584 of file cmdLineTask.py.

◆ coaddType

| lsst.pipe.drivers.multiBandDriver.MultiBandDriverTask.coaddType |

Definition at line 144 of file multiBandDriver.py.

◆ config

◆ ConfigClass

|

static |

Definition at line 97 of file multiBandDriver.py.

◆ deblenderOutput

| lsst.pipe.drivers.multiBandDriver.MultiBandDriverTask.deblenderOutput |

Definition at line 122 of file multiBandDriver.py.

◆ log

◆ measurementInput

| lsst.pipe.drivers.multiBandDriver.MultiBandDriverTask.measurementInput |

Definition at line 121 of file multiBandDriver.py.

◆ metadata

◆ reuse

| lsst.pipe.drivers.multiBandDriver.MultiBandDriverTask.reuse |

Definition at line 116 of file multiBandDriver.py.

◆ RunnerClass

|

static |

Definition at line 99 of file multiBandDriver.py.

The documentation for this class was generated from the following file:

- /j/snowflake/release/lsstsw/stack/0.4.3/Linux64/pipe_drivers/21.0.0-5-gd00fb1e+05fce91b99/python/lsst/pipe/drivers/multiBandDriver.py